Graham says that part of the reason Metaphysic wanted to go on the talent show was to raise public awareness about how good deepfakes are getting and to prod people to start abandoning the old idea that “seeing is believing.” Metaphysic is making money right now by creating deepfakes for Hollywood and the advertising industry. systems render the deepfake images fast enough to work well over real-time video. models handle different parts of a person’s face (for instance, one model might just work to perfect the eyebrow movements, and another the lips, etc.) But he also says that what makes live deepfakes like the ones Metaphysic is showcasing on America’s Got Talent possible are big advances in being able to have the A.I. model, but from a composite where different A.I. Graham says the improvement has come both from a method Metaphysic has used in which the deepfakes are not created from a single A.I. Tom Graham, the Australian lawyer-turned-cryptocurrency-investor who is Ume’s business partner in Metaphysic, tells me that there has been, in his view, a 20-fold increase in the quality of the deepfakes his company can create in just the past twelve months. I was wrong,” Hany Farid, a computer scientist at the University of California at Berkeley who is one of the world’s foremost experts on digital image authentication, tells me. “I remember someone asking me about live deepfakes about two years ago, and I said this is going to take about five years. In fact, it’s getting better so quickly that even those who have been monitoring the field closely have been surprised by its rapid advance. But software is freely available on the internet to allow someone with almost no technical skill to produce a not-half-bad live deepfake. That’s because the creative genius behind Metaphysic is none other than Chris Ume, one of the world’s top deepfake artists (he produced those viral Tom Cruise deepfakes that broke the internet 18 months ago.) 
Ume prides himself on creating deepfakes that are as flawless as possible and sweats every wrinkle and micro-gesture he is trying to replicate.

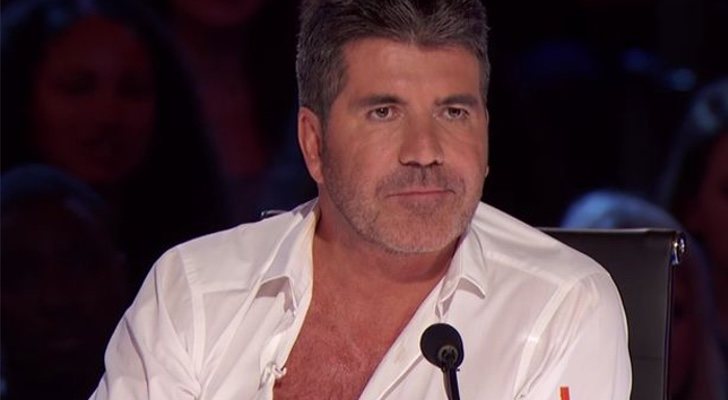

Now, that’s not to say all live deepfakes are as good as the Cowell deepfake on America’s Got Talent. Believable deepfakes can be deployed on a live video transmission. models that created the deepfake couldn’t be run fast enough to produce the deepfake reliably in real-time over a broadcast video. What’s more, to get the details right-particularly around the mouth and eyes and jawline-so that the deepfake was really convincing, took a fair bit of post-production work. Two years ago, most deepfake software couldn’t create a convincing likeliness of someone without a lot of images of the deepfake target-which is why celebrities were often used for deepfakes, since plenty of photos of them from a variety of angles are readily available. The judges have been blown-away by seeing performers who have only the vaguest resemblance to them-a somewhat similar face and body shape-suddenly transform into their digital doppelgangers, right before their eyes. A startup called Metaphysic has managed to advance to the talent competition’s final round, which will air next week, by producing remarkable deepfakes of Simon Cowell and the other contest judges in real-time. If there were any doubt about it, this season’s America’s Got Talent should serve as a wakeup call.
